Intelligence quotient

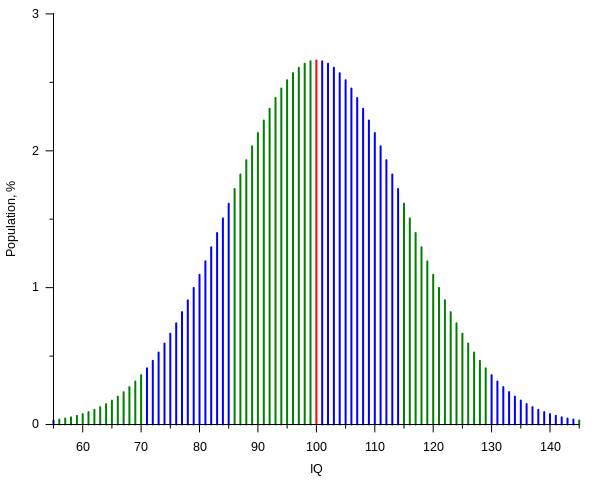

An intelligence quotient, or IQ, is a score derived from one of several standardized tests designed to assess intelligence. The abbreviation "IQ" comes from the German term Intelligenz-Quotient, originally coined by psychologist William Stern. When modern IQ tests are devised, the mean (average) score within an age group is set to 100 and the standard deviation (SD) almost always to 15, although this was not always so historically.[1] Thus, the intention is that approximately 95% of the population scores within two SDs of the mean, i.e. has an IQ between 70 and 130.
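The "approximately 95%" figure can be checked directly from the normal distribution. The sketch below is only an illustration, assuming IQ scores are exactly normal with mean 100 and SD 15; the helper name `fraction_within` is made up for this example.

```python
import math

def fraction_within(k):
    """Share of a normal population lying within k standard deviations of the mean."""
    return math.erf(k / math.sqrt(2))

MEAN, SD = 100, 15                       # conventional IQ scaling (assumed exactly normal)
low, high = MEAN - 2 * SD, MEAN + 2 * SD
share = fraction_within(2)

print(f"IQ {low} to {high}: {share:.2%} of the population")
```

Under that assumption the share comes out slightly above 95%, which is why the text says "approximately".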

The midpoint (mean) is 100 and σ = 15, so 160 = 100 + 4σ.


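An IQ of 160 or higher occurs in roughly 1 in 30,000 people. (Falling outside ±4σ on either side: 1 in 15,787. Counting the upper side only: 1 in 15,787 × 2, about 1 in 31,574.)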
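The 4σ arithmetic can be reproduced with the standard library. This is a rough sketch, assuming a perfectly normal distribution with mean 100 and SD 15; `upper_tail` is a hypothetical helper name, not something from the source.

```python
import math

def upper_tail(z):
    """P(Z > z) for a standard normal variable Z."""
    return 0.5 * math.erfc(z / math.sqrt(2))

MEAN, SD = 100, 15
z = (160 - MEAN) / SD            # IQ 160 sits 4 standard deviations above the mean

one_sided = upper_tail(z)        # IQ of 160 or higher
two_sided = 2 * one_sided        # more than 4 sigma from the mean in either direction

print(f"outside ±4σ: about 1 in {1 / two_sided:,.0f}")
print(f"IQ >= 160:   about 1 in {1 / one_sided:,.0f}")
```

The one-sided rarity is exactly twice the two-sided one because the normal curve is symmetric about the mean.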

Higher deviations

Because of the exponentially decaying tails of the normal distribution, the odds of higher deviations decrease very quickly. From the Rules for normally distributed data:

Thus for a daily process, a 6σ event is expected to happen less than once in a million years. This gives a simple normality test: if one witnesses a 6σ event in daily data and significantly fewer than 1 million years have passed, then a normal distribution most likely does not provide a good model for the magnitude or frequency of large deviations in this respect. In The Black Swan, Nassim Nicholas Taleb gives the example of risk models for which the Black Monday crash was a 36-sigma event: the occurrence of such an event should instantly suggest a catastrophic flaw in a model.
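The "less than once in a million years" claim follows from the two-sided 6σ tail probability. The sketch below is one way to check it, assuming one independent, normally distributed observation per day and a 365.25-day year; the names are illustrative only.

```python
import math

def two_sided_tail(k):
    """P(|Z| > k) for a standard normal variable Z."""
    return math.erfc(k / math.sqrt(2))

DAYS_PER_YEAR = 365.25                   # assumption: one observation per calendar day

p_six_sigma = two_sided_tail(6)          # chance that a single day is a 6-sigma event
days_between = 1 / p_six_sigma           # expected number of days between such events
years_between = days_between / DAYS_PER_YEAR

print(f"about 1 day in {days_between:,.0f}")
print(f"about once every {years_between / 1e6:.2f} million years")
```

Seeing such an event in a few decades of daily data is therefore strong evidence against the normal model, which is the point of the test described above.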
